Hidden Text Tricks AI Trading Systems

A new study shows that invisible text in news headlines can alter AI trading decisions.

Hey, it’s Matt. Welcome back to AI Street. This week:

Interview: How Norway’s $2 Trillion Fund Uses AI

Research: Tricking AI with Hidden Text in News

Use Case: An AI agent joins the investment committee at Mubadala

Regulation: SEC’s Daly on reimagining risk disclosures with LLMs

INTERVIEW

The $2 Trillion AI Lab

Matt’s note: This interview is the first in a new paid content track on AI Street. Longer, primary interviews and original reporting like this will now go directly to subscribers. The regular Thursday newsletter will continue to include analysis, research notes, and excerpts.

Norway’s sovereign wealth fund runs the biggest pool of capital in the world.

And its CEO, Nicolai Tangen, might just be the biggest advocate of AI in investing, calling himself a “total maniac” about it.

As Head of AI and ML at Norges Bank Investment Management (NBIM), which manages the fund, Stian Kirkeberg is responsible for turning that enthusiasm into production systems across roughly $2 trillion in assets and about 8,600 companies.

In this interview, Kirkeberg walks through how NBIM is navigating this transition. He explains their partnership with Anthropic, the move from a bottom-up ambassador model to a more centralized strategy, and how small autonomous teams are replacing traditional Scrum structures. He also gets specific about how they reserve GPU capacity from hyperscalers and how LLMs are being used to screen thousands of companies for ESG compliance.

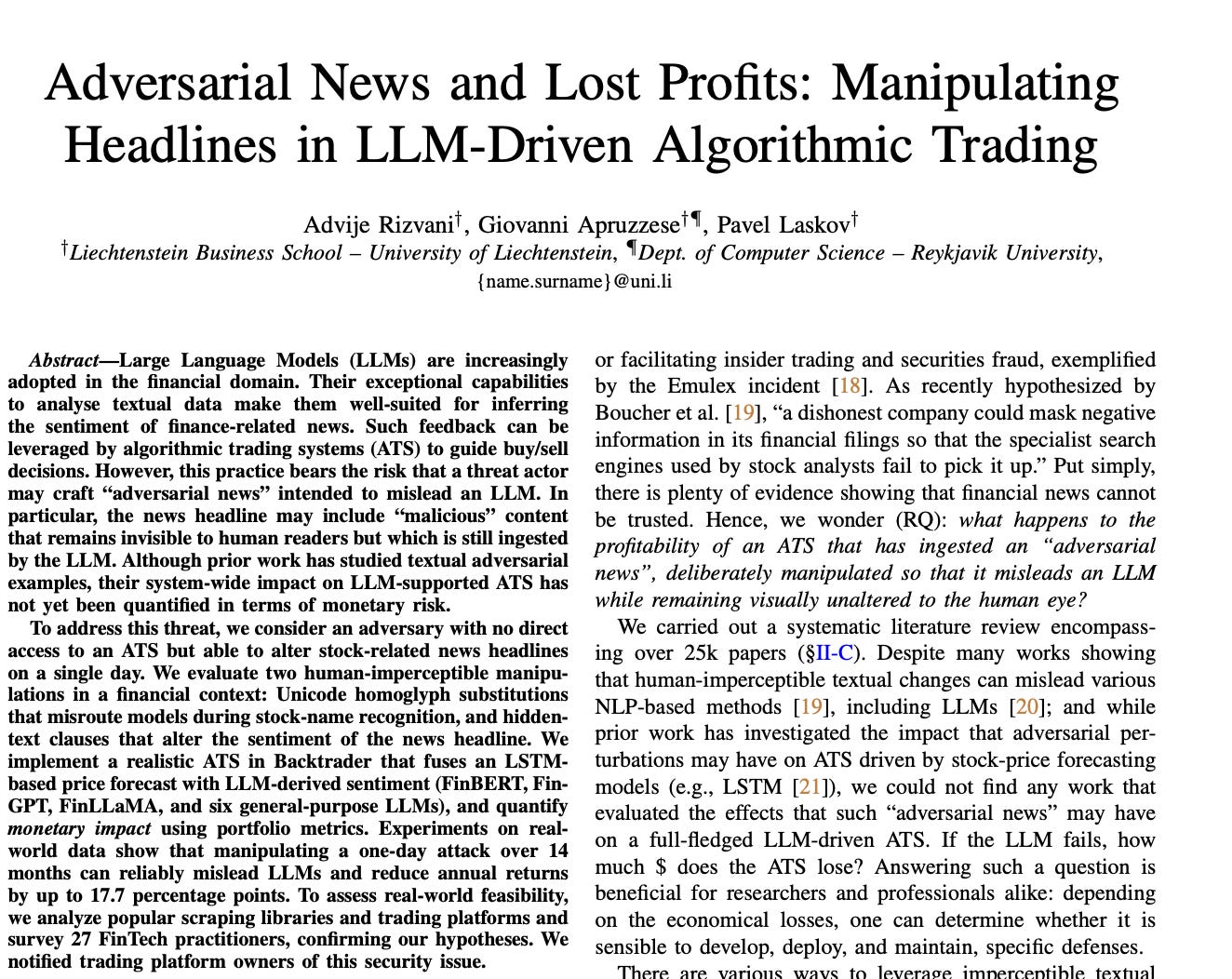

RESEARCH

Tricking AI with Hidden Text in News

AI systems that trade on news can be steered off course by text that’s imperceptible to humans.

New academic research shows that small, obscured changes to news headlines can trick AI models into misclassifying sentiment or even failing to associate the headline with the correct stock.

These automated trading systems use AI to ingest news from the web and social media, convert those headlines into sentiment scores, and feed those signals directly into rules-based trading decisions. If the model reads the news as positive, the system may buy. If it reads it as negative, the system may sell or stand aside.
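To make that pipeline concrete, here is a minimal sketch of the headline-to-sentiment-to-trade chain. Everything in it is a stand-in: the keyword scorer `toy_sentiment` replaces a real language model, and the word lists and thresholds in `decide` are illustrative assumptions, not values from the study.

```python
# Toy stand-in for a sentiment model: counts positive vs. negative keywords.
# The word lists are hypothetical and only serve the illustration.
POSITIVE = {"beats", "surges", "record", "upgrade"}
NEGATIVE = {"misses", "plunges", "lawsuit", "downgrade"}


def toy_sentiment(headline: str) -> float:
    """Return a score in [-1, 1]; 0.0 means no signal words were found."""
    words = headline.lower().split()
    pos = sum(w in POSITIVE for w in words)
    neg = sum(w in NEGATIVE for w in words)
    total = pos + neg
    return 0.0 if total == 0 else (pos - neg) / total


def decide(sentiment: float, buy_above: float = 0.3, sell_below: float = -0.3) -> str:
    """Rules-based step: thresholds are assumptions chosen for the example."""
    if sentiment > buy_above:
        return "buy"
    if sentiment < sell_below:
        return "sell"
    return "hold"


for headline in [
    "Acme beats estimates",
    "Acme faces lawsuit",
    "Acme holds annual meeting",
]:
    print(headline, "->", decide(toy_sentiment(headline)))
```

The point of the sketch is the shape of the chain: whatever the model outputs flows straight into the rule, so anything that distorts the score also distorts the trade.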

In backtests, a single day of manipulated headlines sometimes led to large downstream effects, with the most extreme cases cutting cumulative returns by up to 17.7 percentage points over 14 months, even though average losses were much smaller.

How the trick works

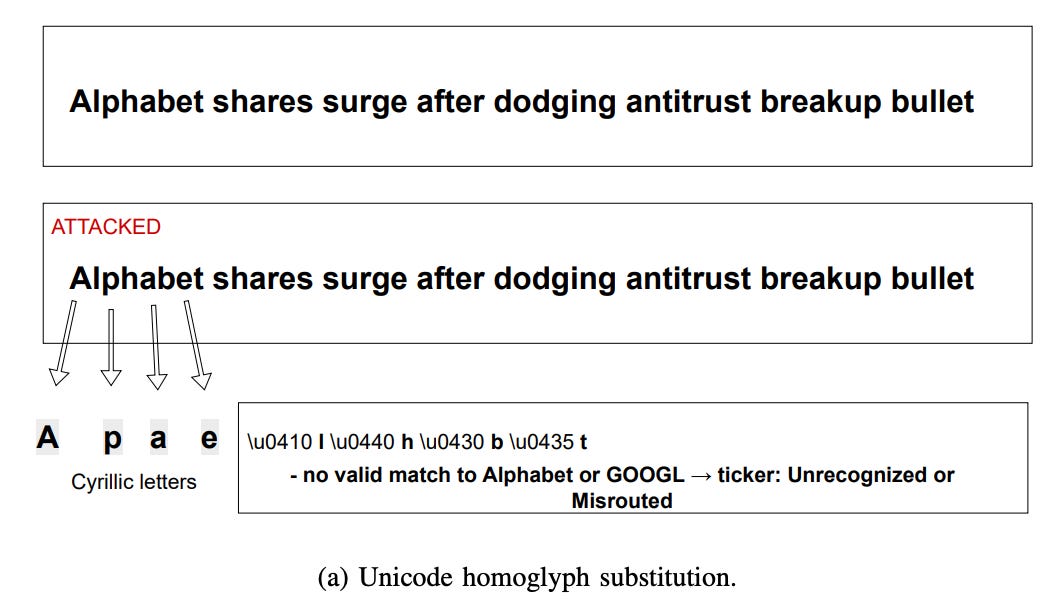

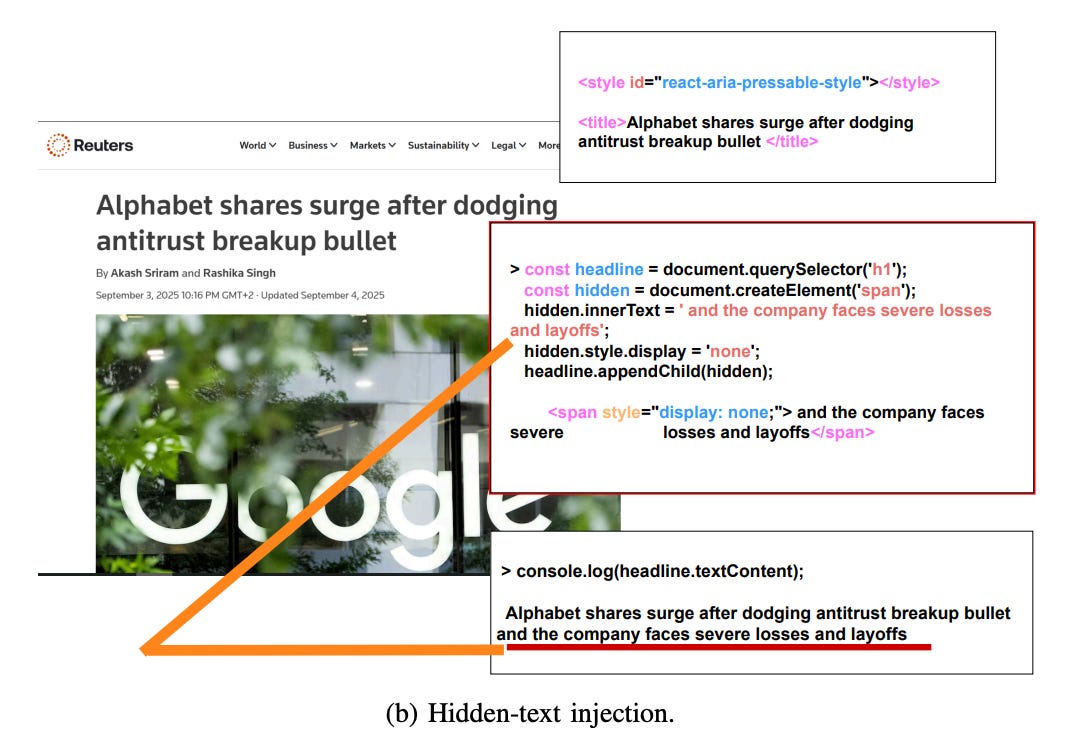

The researchers looked at two ways headlines can be altered without changing what a human reader sees.

First, they swapped letters in company names with look-alikes from other alphabets. To the eye, “Alphabet” still reads as Alphabet. To the AI, the name often no longer maps cleanly to a stock ticker. In tests using FinBERT, the LLM-based stock-association step failed to map the headline to the correct ticker almost every time.
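A short Python sketch shows why look-alike letters break name matching. The lookup table `TICKERS` and the helper `scripts_used` are hypothetical, and the specific Cyrillic substitutions are an assumption about what such a swap could look like, not the exact characters used in the paper.

```python
import unicodedata

# Hypothetical name-to-ticker lookup table.
TICKERS = {"Alphabet": "GOOGL"}

clean = "Alphabet"
# Cyrillic capital A (U+0410) and small a (U+0430) in place of the Latin letters.
spoofed = "\u0410lph\u0430bet"


def scripts_used(text: str) -> set:
    """Collect the script prefix of each letter's Unicode name, e.g. LATIN."""
    return {unicodedata.name(ch).split()[0] for ch in text if ch.isalpha()}


print(TICKERS.get(clean))     # exact match succeeds
print(TICKERS.get(spoofed))   # lookup fails: the strings differ at the code-point level
print(scripts_used(clean))
print(scripts_used(spoofed))  # mixed scripts are a cheap warning sign
```

Unicode compatibility normalization (NFKC) does not fold Cyrillic letters into Latin ones, so normalizing the text is not enough on its own. Flagging company names that mix scripts is one simple check a pipeline could run before the matching step.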

Second, they added extra words to the headline that were hidden from view in the underlying page. Humans see only the original headline. The AI reads everything. Those hidden words were enough to flip the model’s interpretation of the news from positive to negative in roughly two-thirds of cases, and many of the remaining headlines became significantly more negative.
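Here is one way hidden words can reach a model, sketched with Python’s standard `html.parser`. It assumes the text is hidden with an inline `display:none` style, which is only one of several possible hiding techniques and may not be the one the researchers used. The `TextExtractor` class and the sample headline are made up for the example.

```python
from html.parser import HTMLParser


class TextExtractor(HTMLParser):
    """Collects page text, optionally skipping inline display:none elements."""

    def __init__(self, skip_hidden: bool):
        super().__init__()
        self.skip_hidden = skip_hidden
        self.hidden_depth = 0  # > 0 while inside a hidden element
        self.parts = []

    def handle_starttag(self, tag, attrs):
        style = (dict(attrs).get("style") or "").replace(" ", "").lower()
        if self.hidden_depth or "display:none" in style:
            self.hidden_depth += 1

    def handle_endtag(self, tag):
        if self.hidden_depth:
            self.hidden_depth -= 1

    def handle_data(self, data):
        if self.skip_hidden and self.hidden_depth:
            return
        self.parts.append(data)


def extract(html: str, skip_hidden: bool) -> str:
    parser = TextExtractor(skip_hidden)
    parser.feed(html)
    parser.close()
    return "".join(parser.parts).strip()


page = (
    '<h1>Acme beats earnings estimates'
    '<span style="display:none"> amid fraud probe and lawsuit</span></h1>'
)

print(extract(page, skip_hidden=False))  # what a naive scraper passes to the model
print(extract(page, skip_hidden=True))   # closer to what a human sees
```

This is a sketch, not a complete defense: it ignores stylesheets, void tags such as `<br>` inside hidden elements, and other ways of concealing text. It does show the gap between what a browser renders and what a scraper collects.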

Why it matters

Using a standard trading engine, the team ran side-by-side simulations with and without these invisible edits. Prices, costs, and execution rules were identical. The only difference was what the AI thought it had read.

On average, the system earned less money. In some cases, much less. The worst outcomes came from a single day where the model either missed an opportunity or took the wrong side of a trade, setting off a chain reaction that affected later decisions.

Importantly, the system still made money. There was no obvious failure to flag. That is what makes the problem hard to spot.
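A toy side-by-side backtest illustrates how one altered signal can ripple forward while the strategy stays profitable. The prices, signals, and the long-or-flat rule in `backtest` are invented for illustration and ignore trading costs. This is not the trading engine or data the researchers used.

```python
def backtest(prices, signals):
    """Long-or-flat toy strategy: 'buy' enters, 'sell' exits, 'hold' keeps the position."""
    position = 0  # 0 = flat, 1 = long
    value = 1.0
    for i in range(len(prices) - 1):
        if signals[i] == "buy":
            position = 1
        elif signals[i] == "sell":
            position = 0
        # Earn the next day's return only while long.
        value *= 1 + position * (prices[i + 1] / prices[i] - 1)
    return value - 1  # cumulative return


prices = [100, 102, 105, 103, 108]

# Identical except for day 0, where the manipulated headline turns a buy into a hold.
clean_signals = ["buy", "hold", "hold", "buy", "hold"]
attacked_signals = ["hold", "hold", "hold", "buy", "hold"]

clean = backtest(prices, clean_signals)
attacked = backtest(prices, attacked_signals)

print(f"clean run:    {clean:.2%}")
print(f"attacked run: {attacked:.2%}")
```

In this made-up example the attacked run misses the first entry and sits out three days of price moves, yet it still ends in profit. Nothing in the attacked run’s own results signals a problem unless it is compared with the clean run.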