AI for Excel has improved dramatically over the last six months, but it still wouldn’t make it as a junior analyst on Wall Street.

The three leading AI providers (OpenAI, Anthropic and Google) have all released updated capabilities for Excel and are reporting higher accuracy on benchmarks.

For example, OpenAI said this month that performance on its internal investment banking benchmark jumped to 87.3% (GPT-5.4 Thinking) from 43.7% (GPT-5).

While these are material improvements, AI still can’t be relied on without supervision. “Mostly right” is not good enough.

A recent benchmark on AI in Excel points to the same issue: performance drops sharply as tasks get more complex.

FinSheet-Bench tests how models handle real-world private equity workbooks with messy layouts, multiple funds, and non-standard formatting. Across 10 models from OpenAI, Google, and Anthropic, the best result came from Gemini 3.1 Pro at 82.4% accuracy, followed closely by GPT-5.2 with reasoning and Claude Opus 4.6 with thinking, both around 80%.

On simple lookups, top models exceed 90% accuracy.

Accuracy falls off sharply on large, realistic files. On the most complex workbook tested, with 152 companies across eight funds, average accuracy was worse than a coin flip.
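A rough toy model, not FinSheet-Bench's own analysis, helps show why accuracy can collapse as workbooks grow. Assume, purely for illustration, that each lookup is independently correct with probability p and that an answer is right only if all n lookups behind it are right; accuracy is then p^n. The function names below are hypothetical.

```python
# Toy compounding-error model. ASSUMPTION: steps are independent and each
# is correct with probability p; real benchmark questions need not behave this way.

def chained_accuracy(p: float, n: int) -> float:
    """Probability that all n independent steps are correct."""
    return p ** n


def steps_until_coin_flip(p: float) -> int:
    """Smallest number of chained steps at which accuracy falls below 50%."""
    if not 0 <= p < 1:
        raise ValueError("p must be in [0, 1) or accuracy never drops")
    n = 1
    while chained_accuracy(p, n) >= 0.5:
        n += 1
    return n


if __name__ == "__main__":
    p = 0.90  # in line with top models on simple lookups
    for n in (1, 3, 5, 7, 10):
        print(f"{n:>2} steps: {chained_accuracy(p, n):.1%}")
    print("below 50% after", steps_until_coin_flip(p), "steps")
```

Under these assumptions, a model that gets 90% of single lookups right drops below 50% once an answer depends on seven chained steps.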

One reason: models don’t actually “see” the spreadsheet. They operate on a text-serialized version that strips out layout, formatting, and visual structure.

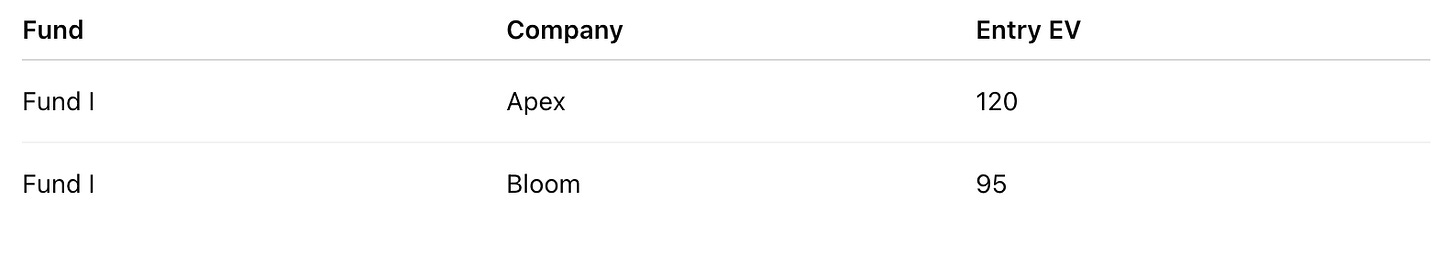

So this:

Becomes this
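The exact text format varies by tool and isn't specified here, so the sketch below assumes a simple `A1: value` layout to show which cues survive serialization. The `Cell` class, the `serialize` and `col_letter` functions, and the sample fund data are all hypothetical, made up for this illustration.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Cell:
    value: object
    bold: bool = False          # visual cue a human reads as "header"
    fill: Optional[str] = None  # e.g. a color band marking one fund
    merged_cols: int = 1        # how many columns the cell spans on screen


def col_letter(idx: int) -> str:
    """Convert a 0-based column index to an Excel-style letter (0 -> A, 26 -> AA)."""
    letters = ""
    idx += 1
    while idx:
        idx, rem = divmod(idx - 1, 26)
        letters = chr(ord("A") + rem) + letters
    return letters


def serialize(grid: List[List[Optional[Cell]]]) -> str:
    """Flatten a grid to 'A1: value' lines; bold, fills and merges are dropped."""
    lines = []
    for r, row in enumerate(grid, start=1):
        for c, cell in enumerate(row):
            if cell is None or cell.value in (None, ""):
                continue  # empty cells, including the tail of a merge, vanish
            lines.append(f"{col_letter(c)}{r}: {cell.value}")
    return "\n".join(lines)


# Hypothetical two-fund layout: colored, merged fund headers group the rows below.
EXAMPLE = [
    [Cell("Fund I", bold=True, fill="blue", merged_cols=2), None],
    [Cell("Company", bold=True), Cell("MOIC", bold=True)],
    [Cell("Acme Co"), Cell(2.1)],
    [Cell("Fund II", bold=True, fill="green", merged_cols=2), None],
    [Cell("Beta Ltd"), Cell(1.4)],
]

if __name__ == "__main__":
    print(serialize(EXAMPLE))
```

In the output, the bold, colored "Fund I" band that visually groups the rows beneath it becomes just `A1: Fund I`; working out that Acme Co belongs to Fund I and Beta Ltd to Fund II is left to the model.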

Spokespeople for OpenAI, Anthropic and Google didn’t respond to requests for comment on FinSheet-Bench’s results.

What I’d like to see from model providers, rather than the latest numbers from internal benchmarks, is basic operating data: real error rates, how often outputs need to be corrected, and what level of reliability users should expect.

ICYMI Research

The problems of AI in Excel echo a broader challenge for AI: when a model has to traverse thousands of pages of documents or dozens of tabs in a workbook, accuracy plummets.

Over the last few weeks, we've covered how reliable models are commanding more of a premium than the most powerful ones.