What Actually Makes AI Reliable

Why guardrails, data, and workflow matter more than the model

Hey, it’s Matt. This week in AI on Wall Street:

Analysis: Why the model matters less than the system around it

Research: Why AI struggles with real Wall Street work

Feature: Can We Break Open AI’s Black Box?

News: Stocks drop on AI doomsday prediction, IBM falls most since 2000

ANALYSIS

Why the Model Matters Less Than the System Around It

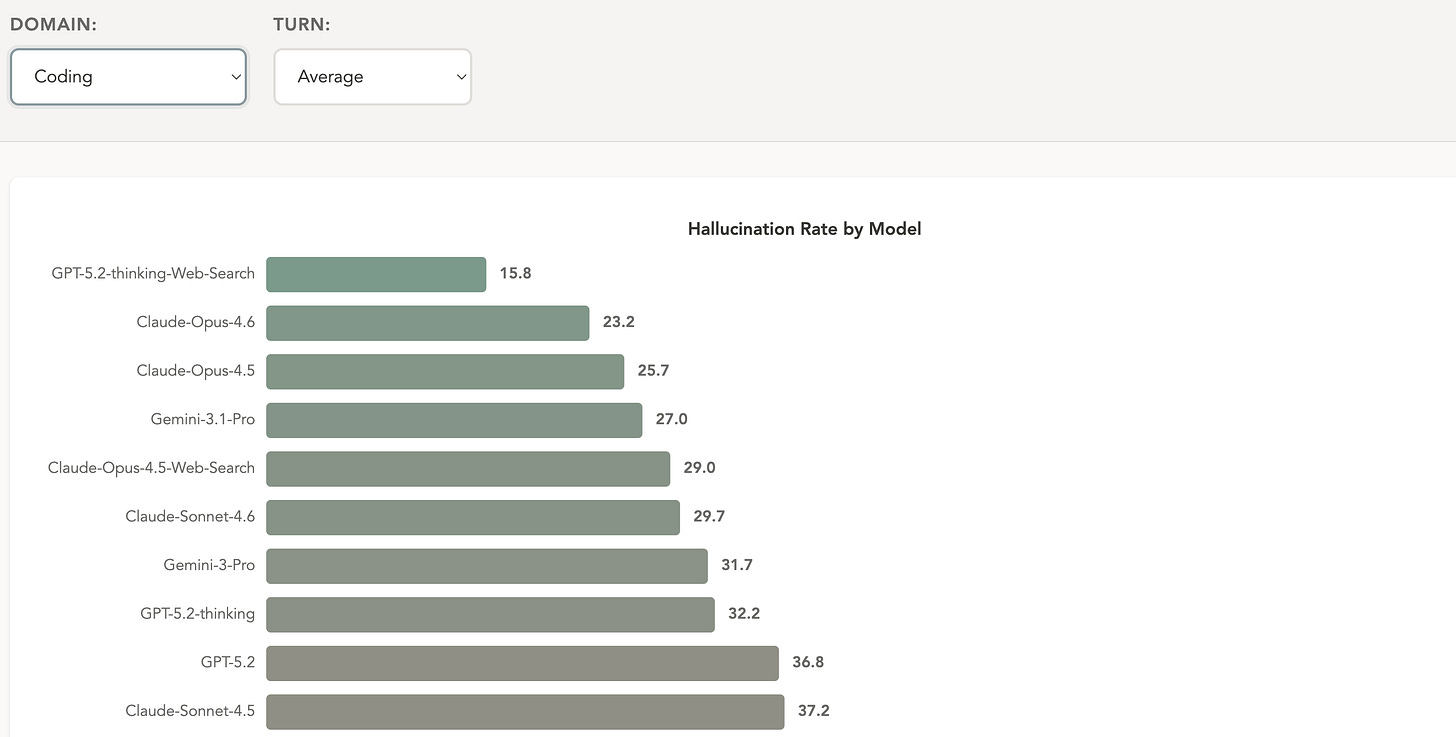

HalluHard is a benchmark focused on hallucination risk. It measures the percentage of model responses that include fabricated or incorrect claims, a direct gauge of reliability rather than capability.

The index breaks responses into individual factual claims and checks them against reliable sources, such as legal filings or medical literature, to see which ones are actually supported. Despite consistent improvements in model capability, I was surprised to see how high hallucination rates remain.
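To make the metric concrete, here is a minimal sketch of a response-level hallucination rate. It assumes each response has already been split into claims and each claim labeled as supported or not. The `Claim` class, the function names, and the toy data are all hypothetical, and this is not the benchmark's actual pipeline.

```python
from dataclasses import dataclass


@dataclass
class Claim:
    text: str
    supported: bool  # assumed label: True if a trusted source backs the claim


def response_is_hallucinated(claims: list[Claim]) -> bool:
    """A response counts as hallucinated if any one claim is unsupported."""
    return any(not claim.supported for claim in claims)


def hallucination_rate(responses: list[list[Claim]]) -> float:
    """Share of responses that contain at least one unsupported claim."""
    if not responses:
        return 0.0
    flagged = sum(response_is_hallucinated(r) for r in responses)
    return flagged / len(responses)


# Toy data, invented for illustration only.
responses = [
    [Claim("Revenue rose 4%", True), Claim("Filed on Mar. 3", True)],
    [Claim("The court ruled in 2019", True), Claim("Cites a nonexistent case", False)],
    [Claim("Dosage is 5 mg", True)],
    [Claim("Function returns a list", False)],
]

print(hallucination_rate(responses))  # 2 of the 4 toy responses are flagged: 0.5
```

One consequence of scoring this way: a long answer with nineteen correct claims and one invented one counts the same as an answer that is wrong throughout.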

Even coding, the most reliable domain tested, still shows hallucination rates around 15%. Yet this hasn’t stopped vibecoding, or writing code with natural language, from taking off.

The more steps an AI model has to take, the more opportunities there are for hallucinations to creep in. Additional instructions, expanding context, and extended back-and-forth all increase the chances of invented details, misread sources, or claims that go beyond what the evidence supports. Early mistakes also tend to compound.
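A quick back-of-the-envelope sketch shows why step count matters. It assumes every step fails independently with the same probability, which is a simplification, and the 2% per-step rate is a made-up number rather than a figure from the benchmark.

```python
def chance_of_any_error(per_step_error: float, steps: int) -> float:
    """Probability that at least one of `steps` independent steps goes wrong."""
    if not 0.0 <= per_step_error <= 1.0:
        raise ValueError("per_step_error must be between 0 and 1")
    if steps < 0:
        raise ValueError("steps must be non-negative")
    return 1.0 - (1.0 - per_step_error) ** steps


# Hypothetical 2% error rate per step.
for steps in (1, 5, 10, 20):
    print(steps, round(chance_of_any_error(0.02, steps), 3))
```

Under those assumptions, a 2% per-step error rate becomes roughly an 18% chance of at least one error over 10 steps. Real workflows can be worse, because an early mistake feeds every step after it.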

That’s why enterprise teams are less focused on whichever model is getting the most attention. Reliability matters more than raw capability, so the emphasis shifts to constraining how the model operates inside a regulated environment.

Many of the teams I’ve talked to are model agnostic, swapping models in and out of their systems depending on the task and cost. Most of the effort instead goes into guardrails: what the system is allowed to do, what it is not allowed to do, where it can pull data from, and when it needs to stop or hand off to a human.
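Here is a rough sketch of what those four questions can look like once they are written down as a policy. Everything in it is hypothetical (the class names, the action and source labels, the step limit), and real deployments are far more involved. The point is that none of it depends on which model sits underneath.

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    ALLOW = "allow"
    BLOCK = "block"
    HAND_OFF = "hand_off_to_human"


@dataclass(frozen=True)
class GuardrailPolicy:
    allowed_actions: frozenset[str]  # what the system may do
    blocked_actions: frozenset[str]  # what it may never do
    allowed_sources: frozenset[str]  # where it may pull data from
    max_steps: int                   # when it must stop and escalate


@dataclass(frozen=True)
class Request:
    action: str
    source: str
    step: int


def evaluate(policy: GuardrailPolicy, request: Request) -> Decision:
    """Check one proposed step against the policy before the model acts."""
    if request.action in policy.blocked_actions:
        return Decision.BLOCK
    if request.source not in policy.allowed_sources:
        return Decision.BLOCK
    if request.step > policy.max_steps:
        return Decision.HAND_OFF
    if request.action not in policy.allowed_actions:
        # Unknown actions go to a person instead of being guessed at.
        return Decision.HAND_OFF
    return Decision.ALLOW


# Illustrative policy; every name here is invented.
policy = GuardrailPolicy(
    allowed_actions=frozenset({"summarize_filing", "draft_memo"}),
    blocked_actions=frozenset({"execute_trade"}),
    allowed_sources=frozenset({"edgar", "internal_research"}),
    max_steps=8,
)

print(evaluate(policy, Request("summarize_filing", "edgar", 2)))
print(evaluate(policy, Request("execute_trade", "edgar", 1)))
print(evaluate(policy, Request("draft_memo", "edgar", 9)))
```

Because the checks run outside the model, swapping one model for another leaves the policy untouched.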

Complicating matters further, there are virtually no established standards for how these systems should interact with each other.

Fayssal El Mofatiche, founder and CEO of Flowistic and a former senior investment engineer at Allianz, compares the current moment to the days before TCP/IP, when common protocols had not yet made the internet possible. As an example, he points to agents communicating in Markdown, which was designed to format text for humans, not to serve as a standardized machine-to-machine protocol like HTTP.

“There is a lot of software engineering that this technology has to go through in order to be more mature and more reliable in terms of its interactions,” said El Mofatiche, whose firm builds AI engineering and deployment solutions. “We are definitely going there, but we are still in very early stages.”

Research is emerging on creating the right scaffolding to improve consistency.

Takeaway

Consumers focus on the latest model and its capabilities. Inside enterprises, the work looks very different. Reliability comes from architecture that is custom-built for each organization, not from the model itself.

Related

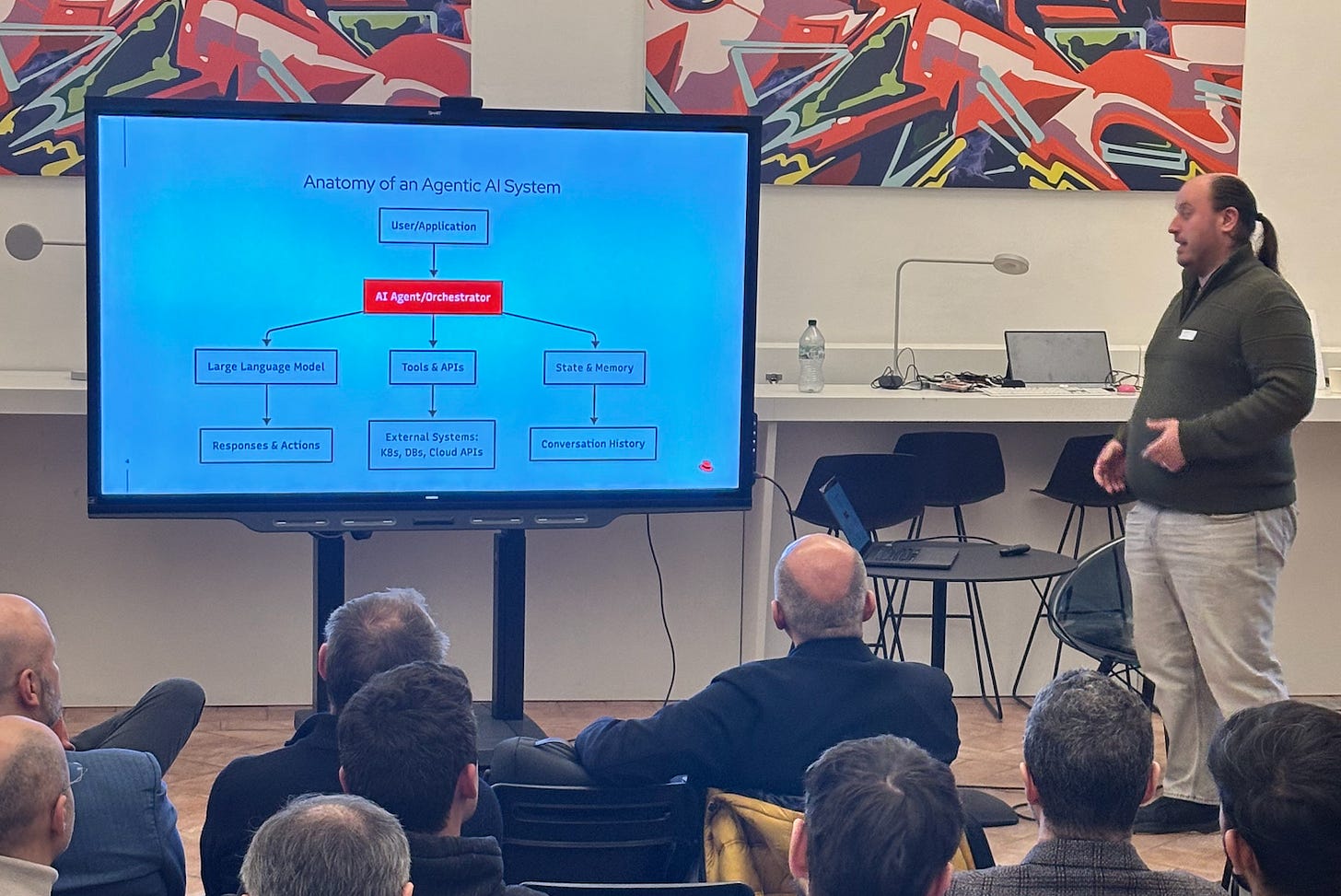

I attended AI Tinkerers Milan, an AI and finance meetup, on Tuesday, and the vibe was… refreshingly practical, a nice break from the rocket emojis on the web. It was a well-done event because the conversations homed in on specifics.

Red Hat’s Daniele Zonca on engineering guardrails for AI

RESEARCH

Why AI Struggles With Real Analyst Work

Due diligence requires evidence across firms and time, which is exactly where current systems fail, according to new research.

AI looks impressive when you ask a narrow question about a single company filing, such as revenue last quarter.

Ask it to compare two firms’ risk disclosures or track strategy over several years, and performance drops fast. Fin-RATE, a new benchmark from researchers at Yale and Goldman Sachs, measures that gap and identifies where models break.

Most financial benchmarks reduce SEC filings to lookup tasks: find a number in a 10-K and repeat it back accurately. That design misses how analysts actually work. Real due diligence requires synthesizing disclosures across companies, time periods, and filing types simultaneously. A pass/fail system doesn’t tell you whether errors came from retrieval, hallucination, or broken reasoning chains.

Here’s what they did:

Built a corpus of 15,311 document segments from 2,472 SEC filings (10-K, 10-Q, 8-K, DEF 14A, and others) covering 43 companies across 36 industries, 2020–2025. Sourced from EDGAR, segmented at official SEC item boundaries, converted to structured Markdown.

Designed three task types:

Single-document questions

Cross-company comparisons

Multi-year analysis within one firm

Created 7,500 question-answer pairs with numbers manually verified against source filings.

Evaluated 17 models, including closed-source systems, major open-source models, and finance-tuned variants.

Tested performance with passages provided directly versus retrieved using four RAG methods.
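Running each question twice, once with the right passage supplied and once with retrieval, is what lets you separate error sources. The sketch below shows one simple way to do that attribution. It is my own illustration, not the researchers' code; the labels, function names, and toy results are hypothetical.

```python
from collections import Counter, defaultdict


def attribute(correct_with_gold: bool, correct_with_retrieval: bool) -> str:
    """Attribute one question's outcome to a likely error source."""
    if not correct_with_gold:
        # Wrong even with the right passage in hand, so retrieval is not the
        # main problem. (A lucky hit in the retrieval run also lands here.)
        return "reasoning_or_hallucination"
    if not correct_with_retrieval:
        # Right with the gold passage, wrong once it had to find evidence.
        return "retrieval"
    return "correct"


def breakdown(results):
    """Count outcomes per task type from (task_type, gold_ok, retrieved_ok) rows."""
    table = defaultdict(Counter)
    for task_type, gold_ok, retrieved_ok in results:
        table[task_type][attribute(gold_ok, retrieved_ok)] += 1
    return {task: dict(counts) for task, counts in table.items()}


# Toy results, invented for illustration only.
results = [
    ("single_document", True, True),
    ("single_document", True, False),
    ("cross_company", False, False),
    ("cross_company", True, False),
    ("multi_year", False, False),
    ("multi_year", True, True),
]

report = breakdown(results)
for task, counts in report.items():
    print(task, counts)
```

A plain pass/fail score would mark four of these six toy questions as failures and stop there. The two-run comparison says which ones a better retriever might fix and which ones it would not.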

The findings:

Detailed results, author commentary, and real-world constraints are available to paid readers:

FEATURE

Can We Break Open AI’s Black Box?

The most surprising aspect of the AI boom is that even its creators can’t explain exactly how these models work.

Researchers at Google DeepMind shared the 2024 Nobel Prize in Chemistry for AlphaFold, yet the internal logic, or the “reasoning,” that led to the breakthrough remains a black box.

As one researcher told me: “It’s much more like growing a plant than building a building.”

I did a deep dive into interpretability that was published this week in the Chicago Booth Review, a publication of the University of Chicago Booth School of Business.

Researchers are moving from doing post-hoc detective work on finished models to trying to build interpretability into the training process from the start.

Access to the story is free.

NEWS

Fictional AI Future Sparks Market Rout

The markets tanked earlier this week over a fictional “2028 memo” that depicted AI wiping out jobs and triggering a broader economic downturn. The authors framed it as a thought exercise, but markets reacted as if the scenario were imminent.

The episode shows how poorly understood current AI capabilities still are. AI can “wow” in demos, but if you talk to anyone actually building in this space, real implementation is much harder.

AI systems still struggle with complex, multi-step reasoning and with consistency outside tightly defined tasks, as I went over in today’s research piece.

Citadel Securities (!) put out a rebuttal.

IBM Drops the Most Since 2000

IBM got caught in the “AI-can-do-everything” narrative this week, with shares dropping 13%—its worst single-day plunge since the dot-com bubble burst in 2000.

The cause? Anthropic published a blog post suggesting AI could help automate modernization of COBOL, the 66-year-old programming language that still handles the majority of ATM transactions.

The market reacted as if this were a magic wand for legacy systems.

For context, I asked Sandra Nudelman, CEO of RecodeX, a company that specializes in transforming code from one language into another, including COBOL, how realistic the announcement is.

Her answer: AI can help, but it is not an automatic process.

AI can speed up understanding how old COBOL systems work, something that historically took years. But modernization timelines are dominated by verifying that the new system behaves exactly like the old one.

“In regulated environments, every AI-generated artifact (documentation, dependency mapping, test scaffolding, translated code) requires careful human confirmation. That validation burden can materially offset the theoretical time savings. You may speed up drafting, but you still have to prove functional equivalence and regulatory correctness,” Nudelman said in an email.
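To give a feel for what proving functional equivalence involves, here is a toy differential test: run the old and new implementations on the same inputs and flag every disagreement. Both functions are hypothetical stand-ins written in Python for illustration, not COBOL and not anything from RecodeX. The rounding difference is invented to show the kind of subtle mismatch that validation has to catch.

```python
from decimal import Decimal, ROUND_HALF_UP


def legacy_interest(balance_cents: int, rate_bps: int) -> int:
    """Stand-in for legacy behavior: truncates fractional cents."""
    return balance_cents * rate_bps // 10_000


def migrated_interest(balance_cents: int, rate_bps: int) -> int:
    """Stand-in for translated code that rounds half up instead of truncating."""
    exact = Decimal(balance_cents * rate_bps) / Decimal(10_000)
    return int(exact.quantize(Decimal("1"), rounding=ROUND_HALF_UP))


def find_mismatches(old, new, cases):
    """Return (inputs, old_result, new_result) for every case that disagrees."""
    mismatches = []
    for args in cases:
        old_result, new_result = old(*args), new(*args)
        if old_result != new_result:
            mismatches.append((args, old_result, new_result))
    return mismatches


# Hypothetical test cases: (balance in cents, rate in basis points).
cases = [(10_000, 250), (12_345, 199), (1, 5_000), (0, 300)]
mismatches = find_mismatches(legacy_interest, migrated_interest, cases)

for args, old_result, new_result in mismatches:
    print(args, "legacy:", old_result, "migrated:", new_result)
```

Two of the four toy cases disagree by a single cent. Finding those cases, deciding which behavior is the correct one, and documenting the decision is the human work that dominates the timeline.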

JPMorgan’s $18 Billion Tech Spend Isn’t Shrinking Its Workforce

JPMorgan spends roughly $18–20 billion a year on technology, more than many competitors’ entire operating budgets. Yet the bank employs about the same number of people as it did a year ago.

Rather than triggering mass layoffs, AI and automation appear to be shifting work internally, reducing some back-office roles while expanding client-facing and revenue functions, according to CNBC.

From the story:

The bank’s head count was roughly unchanged at 318,512 over the past year, but there were changes below the surface: Operations and support staff fell by 4% and 2%, respectively, as the firm added 4% to roles that involve catering to clients and generating revenue.

It did that by using technology to boost the number of accounts that each operations employee can handle (up 6%), reducing the per-unit cost to deal with fraud (down 11%) and making their software engineers 10% more efficient, according to the bank’s presentation.

ROUNDUP

What Else I’m Reading

Bloomberg embeds agentic AI into the Terminal (The Trade)

Retail Trading Demand Hits Record in Early 2026, Up 25% From Prior Peak (TradingView)

JPMorgan will spend almost $20 billion on technology this year (BI)

Jamie Dimon Dismisses Fears Over How AI Will Hit JPMorgan (WSJ)

Top trading engineers are pursuing “generational wealth” at AI firms (eFinancialCareers)

Deutsche Bank, Goldman Look to AI to Flag Trader Misconduct (BBG)

Anthropic Links AI Agent With Tools for Investment Banking, HR (BBG)

CALENDAR

Upcoming AI + Finance Conferences

AI and Future of Finance Conference – Mar. 19–20 • Atlanta

Georgia Tech event featuring academic and industry leaders like the CEOs of Nasdaq and Snowflake.

QuantVision 2026: Fordham’s Quantitative Conference – Mar. 19–20 • NYC

An academic-meets-industry exploration of AI-driven alpha, multimodal alternative data, and systemic risk. (AI Street is sponsoring QuantVision. Great lineup of speakers!)

Future Alpha – Mar. 31–Apr. 1 • NYC

Cross-asset investing summit focused on data-driven strategies, systematic investing, and tech stacks.

AI in Finance Summit NY – Apr. 15–16 • NYC

The latest developments and applications of AI in the financial industry.

Momentum AI New York – Apr. 27–28 • NYC

Senior-leader forum on AI implementation across financial services, from operating models to governance and execution.

AI in Financial Services – May 14 • Chicago

Practitioner-heavy conference on building, scaling, and governing AI in regulated financial institutions.

AI & RegTech for Financial Services & Insurance – May 20–21 • NYC

Covers AI, regulatory technology, and compliance in finance and insurance.

What’s your favorite story from this week?

Do me a favor and hit reply with the number of your favorite story from today:

1. Why the Model Matters Less Than the System Around It

2. Why AI Struggles With Real Analyst Work

3. Can We Break Open AI’s Black Box?

4. News Roundup: Fictional AI Future Sparks Market Rout