Man Group Details its AlphaGPT

Hey, it’s Matt. This week on AI Street:

🤖 Man Group Details its AlphaGPT

🧰 AI Struggles with Deductive Logic

🤠 News Roundup: Adoption Trends

NEXT WEEK

AI Street will be out on Wednesday instead of Thursday because of Thanksgiving 🦃

ANALYSIS

Hedge Funds & AI Frameworks

Over the last year, we've seen well-known hedge funds talk publicly about how AI is impacting returns — at least in broad strokes. Here are a couple:

In March, Bridgewater Associates CEO Nir Bar Dea said its $2 billion AI fund is generating "unique alpha that is uncorrelated to what our humans do."

In July, Man Group’s quant equity unit said it’s using an internal tool, called AlphaGPT, to generate, code and backtest trading ideas, mimicking how researchers develop new trading signals.

Both hedge funds recently published research about using LLMs in investing.

AI in investing looks less like a single model answering questions and more like a small organization. Coming up with trade ideas is broken up into roles: one agent handles search, another interprets the information, another challenges the reasoning, and a final agent signs off. That structure ends up behaving a lot like a real firm, a group of teams.

Man Group on What AI Can (and Can't Yet) Do for Alpha

Man says the technology helps address a growing challenge in quantitative investing: the surge of available data and possible market relationships that outstrip human bandwidth. Early tests show the system can identify connections that researchers may overlook and produce a broader set of ideas. The big picture: AI gives them scale to test more ideas.

The hedge fund has built a workflow that mirrors a quant research pod:

Idea generator that proposes hypotheses at scale

Code implementer that writes production-grade Python against their internal research stack

Evaluator that performs significance, risk and economic-sense checks

The firm also highlights risks. More testing increases the odds of finding bogus signals. Man says it uses safeguards such as prompt controls, validation checks and human review to limit errors and prevent hallucinations or p-hacking, getting a result you want by chance.

The system has been most effective so far in equity research, though Man says its modular design should allow it to expand to other asset classes. The company expects similar AI tools to spread across the industry but argues its long-built data, infrastructure and research processes will remain a competitive advantage.

“To date, the system has produced signals that meet our standards and pass the same evaluation thresholds required for human-generated research,” Man Numeric’s Ziang Fang, CFA, Senior Portfolio Manager, said in the post.

Bridgewater’s AIA Forecaster

The firm released research on an AI forecasting system, which also uses a multi-agent framework rather than rely on a single model. One agent runs adaptive search across curated news sources, others generate and debate forecasts, and a supervisor agent resolves disagreements before a final statistical calibration step. In tests on standard forecasting benchmarks, the system matched human superforecasters.

I plan to do a deeper dive on this paper in the coming weeks but, unlike AI that can work 24/7, I cannot.

Takeaway

Taken together, these projects point in the same direction. AI in investing is less of a single model and more of a framework. Hedge funds are tapping AI’s pattern-matching firepower and applying it to their investment strategies with bespoke agents.

Further Reading

What AI Can (and Can't Yet) Do for Alpha | Man Numeric

AIA Forecaster: Technical Report | Bridgewater AIA Labs

RESEARCH

AI Struggles with Logic

LLMs Rely on Memory, Not Deductive Reasoning

It’s hard to test a technology that’s been trained on the Internet. Research shows that LLMs can “remember” training data even if the model has only seen it a handful of times.

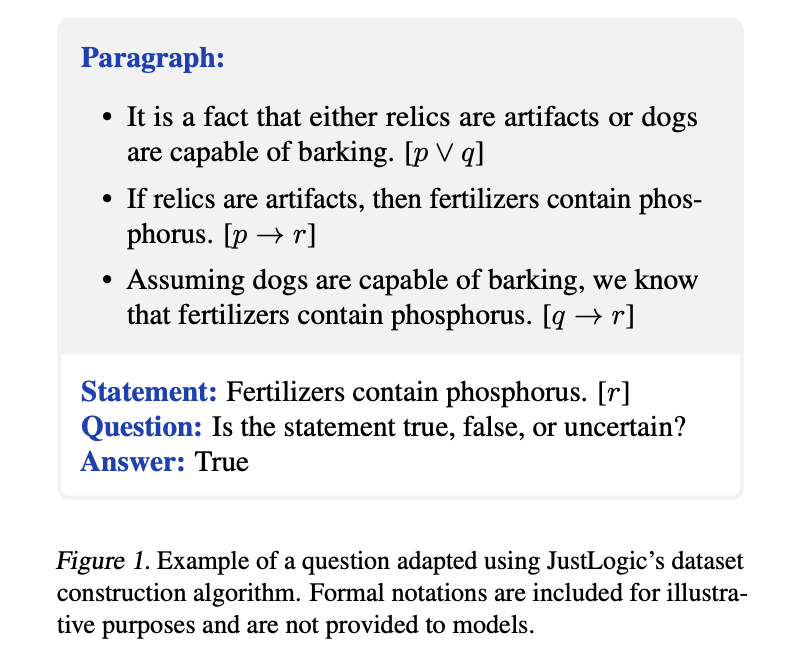

To get around this, researchers came up with a creative solution: a nonsensical but logically sound test. In other words, it’s a logic test not based on actual facts.

Because the arguments do not match real-world facts, the model cannot rely on what it’s seen in its training data.

It must rely on the formal logic of the premises. Here’s an example question:

What was tested

The study evaluated a range of models from OpenAI, Meta, DeepSeek, and Alibaba, covering both reasoning-focused systems and general instruction-tuned models to compare their deductive reasoning performance.

Results

DeepSeek R1 performs best at 80.9%, beating the human average but still well below the ceiling.

OpenAI o1 scores 72.9%, roughly matching typical human performance.

GPT-4o, Llama 3, and other non-reasoning models fall lower, often in the 50 to 65% range.

Humans (18 online volunteers) scored 73% with some participants reaching 100%.

Models do well when the argument requires only one to three steps, but their accuracy drops quickly as the reasoning becomes deeper. DeepSeek R1 holds up better on these harder problems, while smaller reasoning models such as o1-mini eventually perform about as poorly as models that were not trained for reasoning.

The clearest performance gains come from teaching models to walk through problems step by step and from prompting them to lay out their thinking in detail. These changes make a much bigger difference than simply building a larger model, which tends to produce only small improvements on its own.

The researchers note that human performance is likely higher than the measured average, because some participants appeared to skim through the hardest questions instead of solving them carefully.

These gaps in formal logic may not matter as much as they sound. LLMs are trained on real facts and human-written reasoning, so they can often shortcut to the correct answer by exploiting statistical regularities in the data instead of working through a symbolic proof.

That tension is what led me to this paper in the first place: after seeing ChatGPT repeatedly describe what it was doing as “thinking,” I wanted to know what that actually means.

“We definitely shouldn’t overhype LLMs: they are still lacking/different in many areas,” said Michael Chen, the paper’s lead author, told me in an email. “I also don’t think we have settled on a good definition for ‘thinking’ and ‘reasoning’ with respect to LLMs.”

Takeaway

AI’s answers sound so compelling that it seems like genuine reasoning, yet the process appears to be closer to reciting. If you define reasoning as using formal or deductive logic, AI struggles.

Further Reading

JustLogic: A Comprehensive Benchmark for Evaluating Deductive Reasoning in Large Language Models | arXiv

NEWS

AI on Wall Street News

AI Adoption

How Small Businesses are Using AI

There’s lots of chatter about AI leading to widespread job losses, but there’s not much data to back that up. The WSJ wrote this week how small business owners are using the tech for tedious/ junior-level type tasks. Here’s a rundown of examples from the story:

Automated customer service

AI reads incoming emails, interprets customer needs, drafts replies, and sends most messages without human review.

Marketing content generation

AI produces blog posts, social content, and short promotional videos from material like emails or podcast recordings.

Business performance synthesis

AI turns internal data into an audio briefing or podcast that highlights trends and suggests operational improvements.

Website and software updates

AI writes custom code and explains where to place it, allowing small firms to update sites without hiring developers.

AI Is (Finally) Showing Up in Earnings: BBG Opinion

Evidence of AI use cases that increase worker productivity and make the technology indispensable is starting to pop up in earnings reports, with companies such as Trane Technologies and C.H. Robinson Worldwide Inc. deploying AI tools. Bloomberg

AI Regulation

Trump Urges Congress to Block State-Level AI Regulation

President Donald Trump called on Congress to pass a federal standard governing oversight of artificial intelligence and warned that varied regulation at the state level risked slowing the development of an emerging technology that’s critical to the US economy. Bloomberg

Macron Says Germany, France Seek to Delay AI Rules

Germany and France want to delay by a year provisions of the European Union’s AI Act that would regulate high-risk artificial intelligence systems, according to French President Emmanuel Macron. Bloomberg

SPONSORSHIPS

Reach Wall Street’s AI Decision-Makers

Advertise on AI Street to reach a highly engaged audience of decision-makers at firms including JPMorgan, Citadel, BlackRock, Skadden, McKinsey, and more. Sponsorships are reserved for companies in AI, markets, and finance. Email me (Matt@ai-street.co) for more details.

ROUNDUP

What Else I’m Reading

AI in Asset Management: Tools, Applications, and Frontiers | CFA

Gartner Survey Shows Finance AI Adoption Steady in 2025 | PR

BofA says AI is boosting bankers' productivity, revenue | Yahoo

The Bank of America Family Office Study | BofA

Wells’ CEO: It’s Time to Be Honest About AI and Headcount | Financial Brand

CALENDAR

Upcoming AI + Finance Conferences

AI for Finance – November 24–26, 2025 • Paris

Artefact’s AI for Finance summit, focused on generative AI, future of finance, digital sovereignty, and regulation

NeurIPS Workshop: Generative AI in Finance – Dec. 6/7 • San Diego One-day academic workshop at NeurIPS focused on generative AI applications in finance, organized by ML researchers.

The AI Summit - Dec. 10/11 • New York

An enterprise-AI summit bringing together business leaders, technologists and vendors.