Hey, it’s Matt. You’re reading AI Street, where I report on how Wall Street uses AI.

I’ve found myself searching through AI Street archives to pull together what I’ve reported on how hedge funds and market makers use AI. So, I created a running tracker that puts it in one place, combining news stories, regulatory filings, and some of my own reporting.

(If you think I should do the same for private equity firms or sovereign wealth funds, let me know. There is, in fact, a human behind the text you’re reading.)

I think of hedge funds and market makers as using AI in two main ways. I’ve put them in the same broad category because, as the FT has written, the two are converging.

The first way is straightforward: using large language models from frontier labs for familiar tasks like summarizing documents and surfacing ideas, plus more sophisticated workflows such as Man Group’s use of AI to generate and test trading signals.

A NOTE FROM OUR SPONSOR

When your agent reads Bloomberg or Reuters, is it finding an edge?

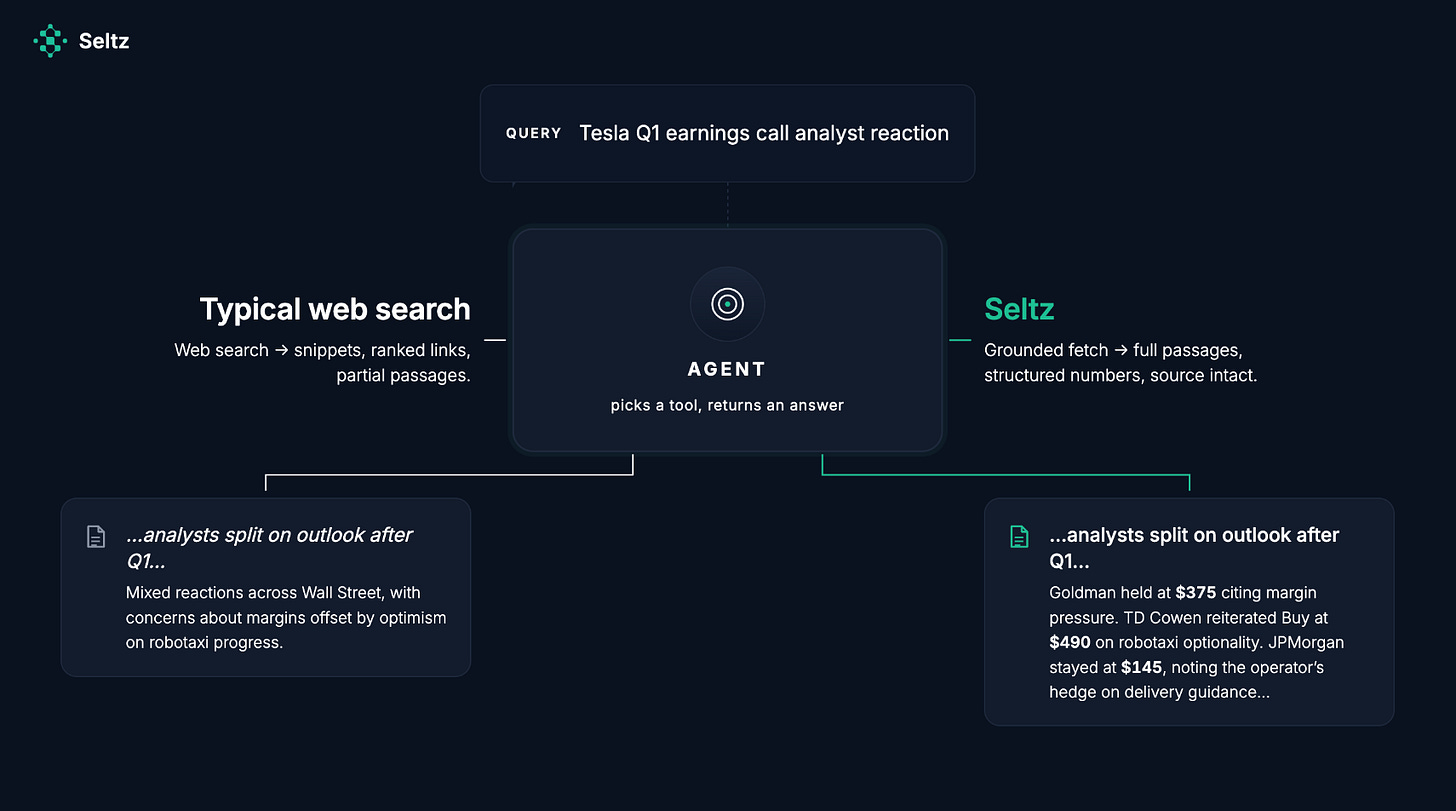

On Tesla’s Q1 earnings, Goldman held at $375, TD Cowen reiterated Buy at $490, JPMorgan stayed at $145. You and your competitors are reading the same call.

The dispersion across sell-side targets. The reasoning behind each one. The hedge buried in the fifth paragraph of an operator quote.

That’s where the analytical signal is. It lives in the paragraphs your agent isn’t getting.

Typical retrieval looks fine. The agent doesn’t know what it’s missing, and neither do you.

Seltz returns full context in hundreds of milliseconds, every result traceable to source. Built for workflows where deep research matters more than the headline.

If you’re running agents on financial news, Seltz will run an eval on your setup.

Email CEO Antonio Mallia at antonio@seltz.ai or ask me for an introduction.

GPU as Edge

The second way funds are using AI is training their own models on financial data. This requires orders of magnitude more computing power – on par with frontier labs like OpenAI and Anthropic – but as HRT’s Marc Khoury said at an industry conference last year, larger models trained on more data keep improving.

A growing body of research suggests that domains like weather, payments, and market microstructure may each have an underlying “language” that transformers are unusually good at identifying.

In other words, if you take a massive amount of weather data and train a transformer model on it, you generally get better results than previous state-of-the-art models. I’m not suggesting that transformers are oracles, but I think it is important to highlight how fundamental a shift this is.

We’re moving away from a world where humans design models with top-down, deductive logic. That approach is being surpassed by bottom-up, pattern-matching, inductive logic. And the gains from these models show no signs of slowing.

Which raises the question: do the firms with the most compute and the most data win?

And as with all of these massive, billion-parameter models, no one knows how they really work on the inside. These models are grown, not built, as Anthropic CEO Dario Amodei is fond of saying.

This is a longer preamble than I anticipated, but I hope it gives you a sense of the landscape.

Man Group

AI is helping the world’s largest listed hedge fund find new investment strategies.

Man Group’s quant equity unit uses an internal tool, called AlphaGPT, to generate, code and backtest trading ideas, mimicking how researchers develop new trading signals. AI in investing here looks less like a single model answering questions and more like a small organization. For trade ideas, AlphaGPT uses a workflow that proposes signals, writes code, runs backtests, and then sends the output into Man’s standard human review process.

The big picture: AI gives them scale to test more ideas. Man Group announced a partnership with Anthropic in February to use Claude and work with Anthropic engineers on AI applications across the firm, with alpha generation as the primary focus.

I spoke with Ziang Fang, Senior Portfolio Manager at Man Numeric, in December about how the system works in practice. The core problem AlphaGPT is solving: there’s been an explosion in data availability, and no one can realistically go through thousands of alternative datasets, many of which are unstructured. The system processes that volume and proposes hypotheses. Humans validate them.

“If you didn’t know whether a signal came from AI or a human, you probably couldn’t tell. The main difference is formatting. The AI output is more consistent,” Fang told me.

“The system has produced signals that meet our standards and pass the same evaluation thresholds required for human-generated research,” Fang wrote in November.

“Along the way we ran into a lot of issues — hallucination, lookahead bias, multiple testing, and many other things,” Fang said. The firm uses prompt controls, validation checks, and human review to limit errors and prevent p-hacking.
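To make the multiple-testing problem concrete, here is a minimal sketch of why testing many machine-generated signals needs a stricter bar. It is not Man Group’s method, which Fang did not detail. The signal names and t-statistics are hypothetical, and the Bonferroni correction is just one standard textbook adjustment, swapped in for illustration.

```python
import math


def two_sided_p_value(t_stat: float) -> float:
    """Two-sided p-value under a standard normal approximation."""
    return math.erfc(abs(t_stat) / math.sqrt(2))


def bonferroni_survivors(t_stats: dict[str, float], alpha: float = 0.05) -> list[str]:
    """Return signals whose p-value clears alpha divided by the number of tests."""
    if not t_stats:
        return []
    threshold = alpha / len(t_stats)
    return [name for name, t in t_stats.items() if two_sided_p_value(t) < threshold]


# Hypothetical backtest t-statistics for 100 machine-proposed signals.
candidates = {f"signal_{i}": 2.1 for i in range(98)}
candidates["signal_98"] = 3.0
candidates["signal_99"] = 4.5

# Judged one at a time at the 5% level, every signal looks significant.
naive = [name for name, t in candidates.items() if two_sided_p_value(t) < 0.05]

# Adjusted for 100 tests, the bar rises to p < 0.0005.
adjusted = bonferroni_survivors(candidates)

print(len(naive), "pass the naive test;", len(adjusted), "pass after adjustment")
```

In this toy example, all 100 signals pass when judged individually, and only one survives once the number of tests is taken into account. The more ideas a system can generate and backtest, the more this kind of correction matters.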

Hudson River Trading

Hudson River Trading is building foundation-style models trained on decades of global market data, applying techniques similar to those used in frontier language models to automated trading.

The firm is training these models on more than two decades of data spanning equities, futures, and cryptocurrencies, totaling over 100 terabytes. That translates into “something like trillions of tokens, in the same realm as what you train frontier language models on,” said Marc Khoury, an algorithm developer at HRT, speaking at an academic conference last summer.
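As a rough sanity check on the “trillions of tokens” figure, here is some back-of-envelope arithmetic. HRT hasn’t said how it turns market data into tokens, so the bytes-per-token values below are purely hypothetical assumptions. Only the 100-terabyte figure comes from Khoury’s talk.

```python
DATASET_BYTES = 100 * 10**12  # "over 100 terabytes", per the figure above


def estimate_tokens(dataset_bytes: int, bytes_per_token: int) -> int:
    """Estimate token count if each token consumes a fixed number of raw bytes."""
    return dataset_bytes // bytes_per_token


# Hypothetical encodings: bytes of raw market data consumed per token.
for bytes_per_token in (10, 50, 100):
    tokens = estimate_tokens(DATASET_BYTES, bytes_per_token)
    print(f"{bytes_per_token:>3} bytes/token -> {tokens / 1e12:.0f} trillion tokens")
```

Under these assumptions the dataset works out to somewhere between 1 trillion and 10 trillion tokens, which is consistent with Khoury’s description.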

HRT’s goal is to model markets as sequences of interactions. Much of the predictive signal lies in how order book events evolve over time, especially during fast-moving conditions. “As I increase the model size, the model continues to improve,” Khoury said — the same scaling pattern seen in large language models.

HRT is responsible for about 10% of all US equity volume. HRT’s AI Labs team, HAIL, says deep learning is core to the firm’s trading, and that HRT has spent more than a decade integrating AI research and infrastructure into its trading strategies. HRT is a proprietary trading firm and doesn’t file an ADV, so there’s nothing to cross-reference on the regulatory side.

And to my earlier aside about whether the firms with the most compute and data outperform their competitors — here’s HRT’s data center inside a mountain:

Bridgewater

In March 2025, Bridgewater CEO Nir Bar Dea said at a Bloomberg conference that the firm’s $2 billion AI fund is generating “unique alpha uncorrelated to what our humans do.” The fund is delivering returns “comparable” to the firm’s human-led strategies, Bar Dea said. No specific figures were disclosed.

The fund is run by Co-CIO Greg Jensen and uses Bridgewater’s proprietary technology with models from OpenAI, Anthropic, and Perplexity. Bridgewater formed its Artificial Investment Associate (AIA) Labs division in 2023. The AIA serves as the primary decision-maker in the fund, while human professionals oversee risk management, data acquisition, and trade execution.

In its ADV, Bridgewater describes AI and machine learning as examples of “new sources of alpha” the firm is developing. That’s a description of Bridgewater’s strategy and capabilities, of what it is building toward, not a disclosure of realized fund performance. Bar Dea’s claim at Bloomberg is the stronger statement, and even that came without figures.

Jane Street

Jane Street says deep learning is “the future of quantitative trading.” The firm, which reported record trading revenue in 2025, builds neural-network models that drive its trading strategies, along with the infrastructure needed for training and inference.

Jane Street says it has tens of thousands of high-end GPUs, more than 1 exabyte of current storage, and about $400 billion in daily filled dollars. It trades on more than 200 electronic exchanges and venues, making it one of the world’s largest market makers.

Last month, Jane Street committed about $6 billion to use CoreWeave’s AI cloud platform and made a separate $1 billion equity investment in the company. The agreement gives Jane Street access to next-generation compute across multiple facilities, including Nvidia’s Vera Rubin technology.

CoreWeave said Jane Street has relied on its infrastructure since 2024 to train and scale proprietary models. Jane Street’s head of quant research, Craig Falls, said CoreWeave provides the GPU infrastructure and technical support needed for the firm’s machine-learning and research workloads.

Bloomberg reported that Jane Street generated $39.6 billion in trading revenue in 2025, surpassing major Wall Street banks with only about 3,500 employees. That doesn’t prove AI or compute caused the trading haul. But it does show why access to GPUs, storage, and low-latency model infrastructure has become strategic.

More firms detailed below, including Citadel, XTX, AQR, Viking and Millennium.