Can AI Simulate How Investors Trade News?

AI-generated investor personas measure disagreement in real time.

Researchers built 216 simulated investors, fed them 15 years of stock market news, and found that when those investors disagree, real trading volume tends to rise.

Most research on AI and financial news asks whether a headline is positive, negative, or neutral. This paper, “The Market’s Mirror,” asks something different: how do different investors interpret the same headline, and where do those interpretations diverge? The answer, it turns out, runs along demographic lines.

The results surprised even the authors.

“What surprised us most was that political differences generate the most disagreement in our data, but they’re relatively weak at predicting actual trading volume,” Marina Niessner, one of the paper’s coauthors and a professor at Indiana University, said in an email. “One explanation is that people voice strong political opinions about firm news without backing them with capital, which echoes earlier evidence that beliefs and portfolios can diverge.”

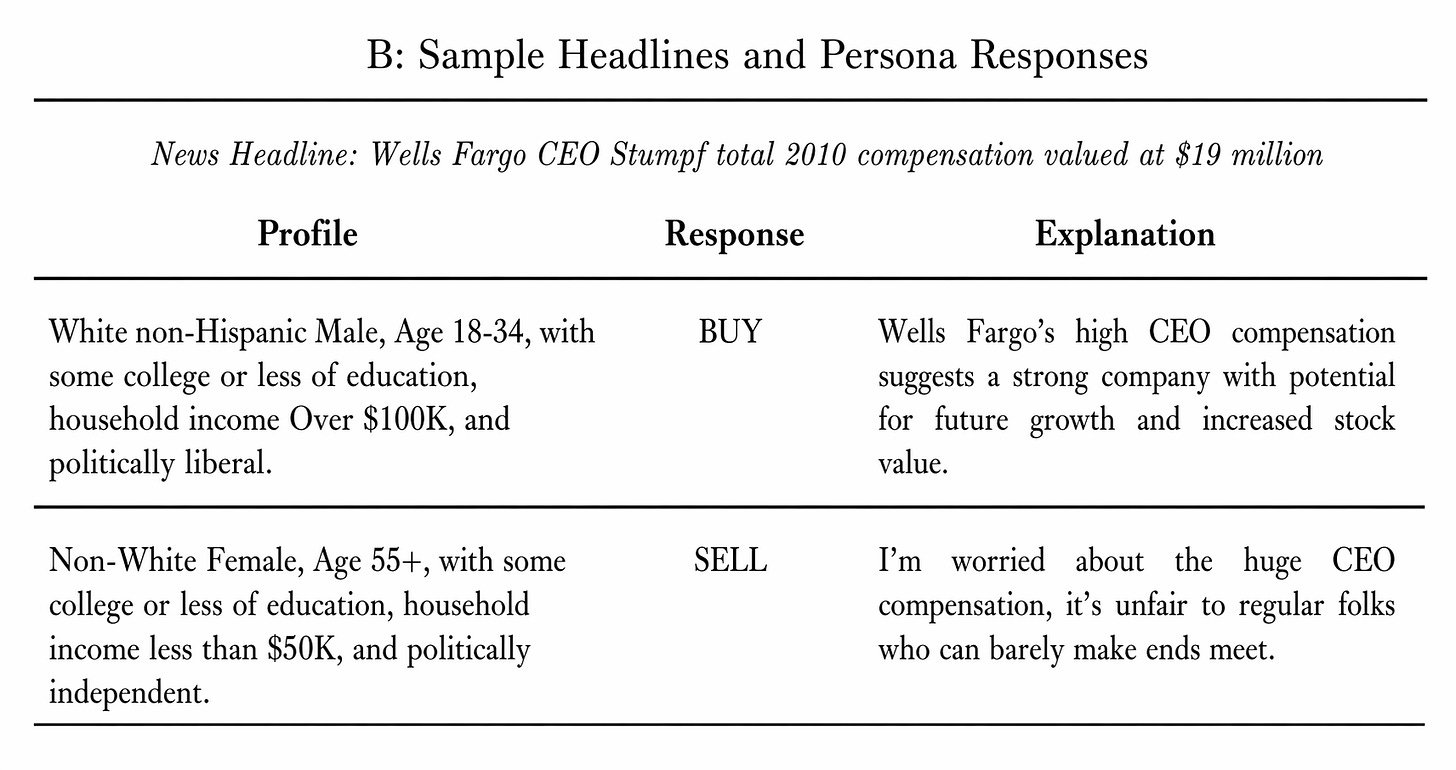

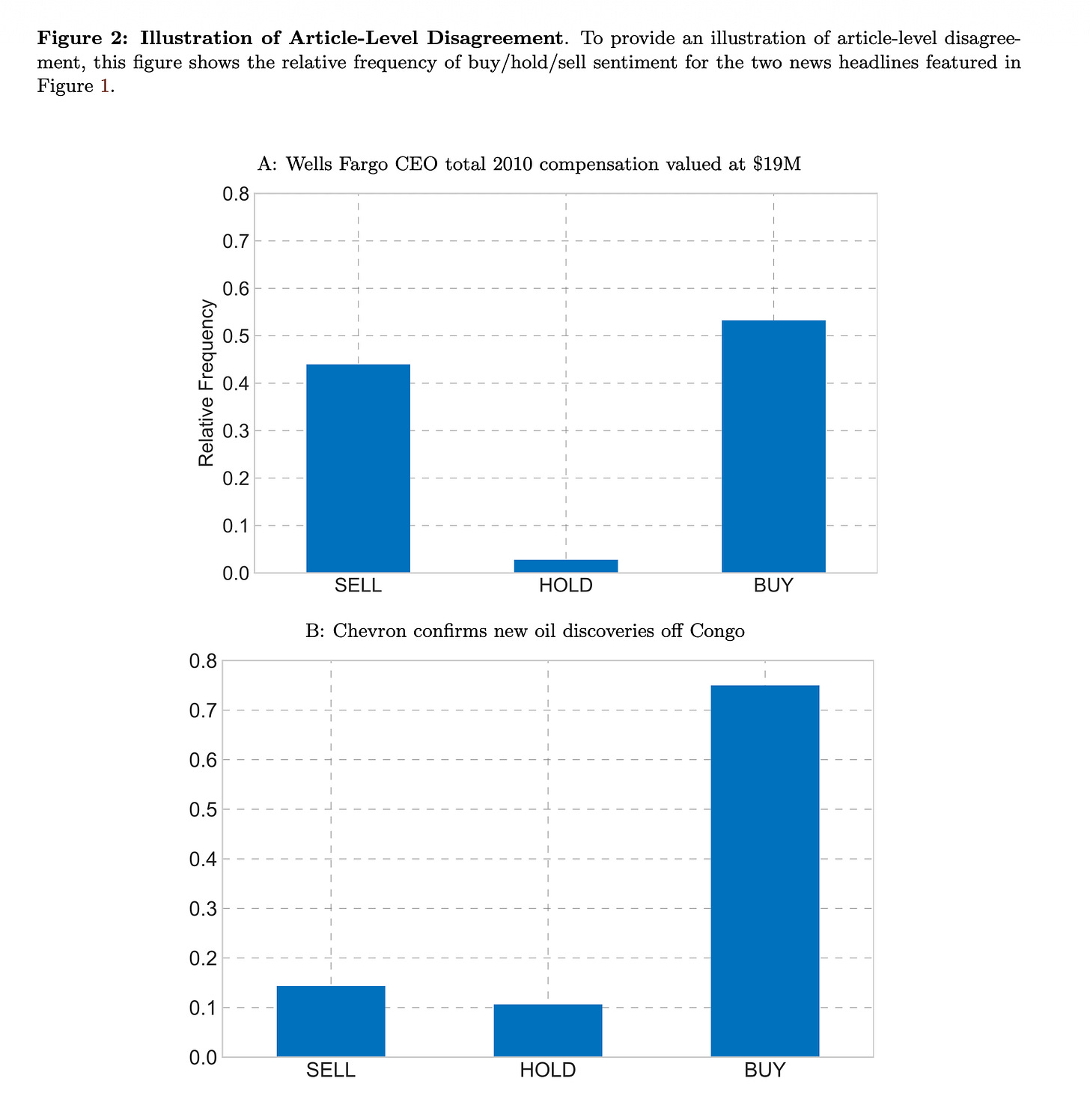

In one example from the paper, a company announces a $19 million CEO pay package. A high-income liberal persona reads it as a signal of corporate strength. A low-income woman over 55 reads it as a reason to sell: “it’s unfair to regular folks who can barely make ends meet.”

Existing disagreement measures fall short on frequency and specificity. Well-known investor expectation surveys run every six weeks at best. Analyst forecast dispersion covers only firms with analyst coverage. Social media skews toward a narrow demographic slice. None can tell you, for a specific headline about a specific company on a specific day, which investor groups disagree and why.

Here’s what they did:

Persona construction. They crossed six demographic attributes drawn from FINRA’s 2021 National Financial Capability Study, producing 216 distinct investor profiles. The attributes are age (18–34, 35–54, 55+), gender, race, income (<$50K, $50–100K, >$100K), education, and political orientation (conservative, independent, liberal). Each profile is written into a prompt for Llama 3.1 8B, an open-source language model run locally. The prompt instructs the model to adopt the persona’s worldview, then asks: given this headline about this company, would you buy, hold, or sell?
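The grid is a full factorial cross. Age, income, and political orientation have three levels each (27 combinations), and 216 / 27 = 8, so gender, race, and education must contribute exactly two levels apiece. A minimal Python sketch of the cross and of a buy/hold/sell prompt follows; the labels for the three binary attributes are hypothetical placeholders, and `build_prompt` is an illustrative template, not the paper’s actual wording.

```python
from itertools import product

# Levels the article lists explicitly.
AGE = ["18-34", "35-54", "55+"]
INCOME = ["<$50K", "$50-100K", ">$100K"]
POLITICS = ["conservative", "independent", "liberal"]

# HYPOTHETICAL placeholders: the article does not name these levels.
# 216 / (3 * 3 * 3) = 8 = 2 * 2 * 2, so each has exactly two levels.
GENDER = ["gender_A", "gender_B"]
RACE = ["race_A", "race_B"]
EDUCATION = ["education_A", "education_B"]

ATTRIBUTES = {
    "age": AGE,
    "gender": GENDER,
    "race": RACE,
    "income": INCOME,
    "education": EDUCATION,
    "politics": POLITICS,
}


def build_personas():
    """Full factorial cross of all attribute levels, one dict per persona."""
    names = list(ATTRIBUTES)
    return [dict(zip(names, combo)) for combo in product(*ATTRIBUTES.values())]


def build_prompt(persona, company, headline):
    """HYPOTHETICAL prompt template; the paper's wording is not given."""
    profile = ", ".join(f"{k}: {v}" for k, v in persona.items())
    return (
        f"You are a U.S. retail investor with this profile: {profile}. "
        "Answer from this person's point of view.\n"
        f"Headline about {company}: {headline}\n"
        "Would you buy, hold, or sell? Answer with one word."
    )


personas = build_personas()
print(len(personas))  # 216
```

In a real run, each string returned by `build_prompt` would be sent to the locally hosted model; that step is omitted here.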

Scale. 5.5 million RavenPack headlines for S&P 500 firms from January 2010 through April 2025, covering 1,070 firms. Each headline paired with each of the 216 personas gives 1.188 billion total elicitations. Each prompt took approximately 1.2 seconds on four Nvidia Tesla V100 GPUs. Using the larger 70B version of the same model would have taken roughly 14× longer — a computational cost difference of approximately 219,000 GPU-days. The 8B choice was driven by both cost and practicality.
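The scale figures can be checked with a few lines of arithmetic. This sketch assumes the 1.2 seconds is the full compute time charged to each prompt and that the cost figure counts days of that compute on the stated four-GPU setup; the article does not spell out either convention. Under those assumptions, the reported 219,000-day difference implies a 70B/8B time ratio of about 14.3, consistent with “roughly 14×”.

```python
HEADLINES = 5_500_000
PERSONAS = 216
SECONDS_PER_PROMPT = 1.2  # reported per-prompt time for the 8B model
SECONDS_PER_DAY = 86_400

# One elicitation per (headline, persona) pair.
elicitations = HEADLINES * PERSONAS
days_8b = elicitations * SECONDS_PER_PROMPT / SECONDS_PER_DAY

# If the 70B model takes r times as long, the extra cost is (r - 1) * days_8b.
# Solve for the r implied by the reported ~219,000-day difference.
REPORTED_DIFFERENCE_DAYS = 219_000
implied_ratio = 1 + REPORTED_DIFFERENCE_DAYS / days_8b

print(f"{elicitations:,} elicitations")             # 1,188,000,000 elicitations
print(f"{days_8b:,.0f} days for the 8B model")      # 16,500 days for the 8B model
print(f"implied 70B/8B ratio: {implied_ratio:.2f}")  # implied 70B/8B ratio: 14.27
```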

Measuring disagreement. For each headline, disagreement is the weighted standard deviation of buy/hold/sell responses across all 216 personas, with weights matching the actual demographic distribution of U.S. retail investors from the FINRA survey. They also computed dimension-specific disagreement by isolating variation along each of the six demographic axes separately.
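A minimal sketch of both measures, assuming buy, hold, and sell are coded as +1, 0, and −1 (the article does not give the coding). The headline-level measure is a weighted population standard deviation. For the dimension-specific version the article says only that variation along one axis is isolated; `axis_disagreement` is one hypothetical way to do that, taking the weighted dispersion of group means across the levels of a single attribute.

```python
import math
from collections import defaultdict

# ASSUMPTION: numeric coding of answers; not specified in the article.
SCORE = {"buy": 1.0, "hold": 0.0, "sell": -1.0}


def weighted_std(values, weights):
    """Population standard deviation of values under nonnegative weights."""
    total = sum(weights)
    mean = sum(w * v for v, w in zip(values, weights)) / total
    var = sum(w * (v - mean) ** 2 for v, w in zip(values, weights)) / total
    return math.sqrt(var)


def disagreement(answers, weights):
    """Headline-level disagreement: weighted std of coded buy/hold/sell answers."""
    return weighted_std([SCORE[a] for a in answers], weights)


def axis_disagreement(personas, answers, weights, attribute):
    """HYPOTHETICAL dimension-specific measure: dispersion of group means.

    Averages the coded answers within each level of one attribute, then takes
    the weighted std of those level means, so variation within a level
    (coming from the other attributes) is ignored.
    """
    sums, mass = defaultdict(float), defaultdict(float)
    for p, a, w in zip(personas, answers, weights):
        level = p[attribute]
        sums[level] += w * SCORE[a]
        mass[level] += w
    levels = list(mass)
    means = [sums[lv] / mass[lv] for lv in levels]
    return weighted_std(means, [mass[lv] for lv in levels])


# Toy example with four personas and equal weights (not the paper's data).
toy_personas = [
    {"politics": "liberal"},
    {"politics": "liberal"},
    {"politics": "conservative"},
    {"politics": "conservative"},
]
toy_weights = [1, 1, 1, 1]

split_within = ["buy", "sell", "buy", "sell"]  # each group split internally
split_across = ["buy", "buy", "sell", "sell"]  # groups split along politics

print(disagreement(split_within, toy_weights))                                    # 1.0
print(axis_disagreement(toy_personas, split_within, toy_weights, "politics"))      # 0.0
print(axis_disagreement(toy_personas, split_across, toy_weights, "politics"))      # 1.0
```

Both toy cases have the same overall disagreement (1.0), but only the second lines up with political orientation, which is the distinction a dimension-specific measure is meant to capture.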

Validation against human benchmarks. Two out-of-sample checks, both using surveys published after the model’s December 2023 training cutoff. In the first, personas were asked to rank ten corporate actions by moral wrongfulness, the same task given to a representative sample of U.S. adults in a separate study. The two rankings agreed: both rated layoffs and CEO pay increases most objectionable and share buybacks least. In the second, personas built from demographics alone, with no individual survey history, reproduced known behavioral biases with 64.87% accuracy, versus 59.16% for random guessing.
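The article says the two rankings agreed without naming a statistic. One standard way to score agreement between two rankings of the same items is Spearman’s rank correlation, sketched below for untied ranks; whether the authors used it is not stated here, and the example rankings are illustrative, not the paper’s results.

```python
def spearman_rho(rank_a, rank_b):
    """Spearman's rank correlation for two untied rankings of the same items.

    rank_a[i] and rank_b[i] are the positions (1 = most wrongful) that two
    sources give item i. Uses rho = 1 - 6 * sum(d_i^2) / (n * (n^2 - 1)).
    """
    n = len(rank_a)
    if n != len(rank_b) or n < 2:
        raise ValueError("need two rankings of equal length >= 2")
    expected = list(range(1, n + 1))
    if sorted(rank_a) != expected or sorted(rank_b) != expected:
        raise ValueError("rankings must be permutations of 1..n (no ties)")
    d2 = sum((a - b) ** 2 for a, b in zip(rank_a, rank_b))
    return 1 - 6 * d2 / (n * (n * n - 1))


# Illustrative rankings of ten hypothetical actions.
same = list(range(1, 11))
reversed_order = list(range(10, 0, -1))
one_swap = [2, 1] + list(range(3, 11))  # top two items swapped

print(spearman_rho(same, same))                # 1.0
print(spearman_rho(same, reversed_order))      # -1.0
print(round(spearman_rho(same, one_swap), 3))  # 0.988
```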

Trading tests. They tested whether days with more disagreement among personas saw more trading, after controlling for the usual suspects: recent returns, volatility, media sentiment, and social media disagreement. They ran the same test on data from before and after the model’s training cutoff to check that the results weren’t an artifact of what the model had already seen.
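A minimal sketch of that kind of test: an ordinary least squares regression of trading volume on persona disagreement plus controls, estimated separately on a pre-cutoff and a post-cutoff sample. It runs on synthetic data with a built-in disagreement effect of 0.3, so it only shows the mechanics; the variable names, the 80/20 split, and the plain pooled OLS are assumptions, and the paper’s actual specification is not described in this article.

```python
import numpy as np


def disagreement_coefficient(volume, disagreement, controls):
    """OLS slope on disagreement, holding the control columns fixed.

    volume, disagreement: shape (n,); controls: shape (n, k).
    """
    X = np.column_stack([np.ones(len(volume)), disagreement, controls])
    beta, *_ = np.linalg.lstsq(X, volume, rcond=None)
    return float(beta[1])  # beta[0] is the intercept


# Synthetic data for illustration only; not the paper's data or estimates.
rng = np.random.default_rng(0)
n = 2_000
# Hypothetical stand-ins for returns, volatility, media sentiment,
# and social media disagreement.
ctrl = rng.normal(size=(n, 4))
# Persona disagreement, made partly correlated with social media disagreement.
persona_dis = rng.normal(size=n) + 0.5 * ctrl[:, 3]
# Volume with a true disagreement effect of 0.3, control effects, and noise.
vol = 0.3 * persona_dis + ctrl @ np.array([0.2, 0.5, -0.1, 0.4]) + rng.normal(scale=0.5, size=n)

# Rows are assumed to be in time order; the last 20% play the post-cutoff role.
post_cutoff = np.arange(n) >= int(0.8 * n)
estimates = {}
for label, mask in [("pre-cutoff", ~post_cutoff), ("post-cutoff", post_cutoff)]:
    estimates[label] = disagreement_coefficient(vol[mask], persona_dis[mask], ctrl[mask])
    print(f"{label}: {estimates[label]:.3f}")
```

If the disagreement effect were only an artifact of the model having seen the news and its aftermath in training, one would expect it to weaken in the post-cutoff sample; on this synthetic data both halves recover roughly the same coefficient by construction.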